9 Tools Every Information Analyst Should Have

I was interested to read Rebecca Murphey’s Baseline for Front End Developers in which the author realizes that she has begun to take for granted a basic set of tools of the trade. She presents “a few things that [she] wants to start expecting people to be familiar with, along with some resources you can use if you feel like you need to get up to speed”. I’d like to pay homage to that article and revise it in light of the tools I believe are essential for an Information Analyst. There is considerable overlap in the tools suggested although the application will differ.

GNU/ UNIX Command Line Tools

These tools are especially powerful because they don’t require you to load the entire file into memory. This makes it possible to work with very large files efficiently.

- head, tail, less, cat etc. for looking at files

- awk, sed, and tr for processing and altering files

- cut, paste, and join, again for editing and combining text files

- sort and uniq

- wc for counting lines/ words/ characters

- iconv for converting between different encodings
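The streaming idea behind these tools carries over to scripts as well. The following is a minimal Python sketch, not a replacement for the tools themselves: it reads one line at a time and tallies the values in a single column, roughly what a cut, sort and uniq -c pipeline would do. The function name count_values and the file name in the usage note are hypothetical, and the naive split assumes fields contain no quoted delimiters.

```python
from collections import Counter


def count_values(lines, column, delimiter=","):
    """Tally the values found in one column, reading one line at a time.

    `lines` can be any iterable of strings, including an open file handle,
    so only the running counts are held in memory, never the whole file.
    """
    counts = Counter()
    for line in lines:
        fields = line.rstrip("\n").split(delimiter)
        # Skip short lines rather than failing on ragged input.
        if column < len(fields):
            counts[fields[column]] += 1
    return counts
```

Passing an open file handle, for example `with open("visits.csv") as handle: count_values(handle, 1)`, keeps memory use proportional to the number of distinct values rather than the size of the file.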

Some useful resources include:

- Unix as an IDE

- Simplify data extraction using Linux text utilities

- Text Processing Commands in the Advanced Bash-Scripting Guide

- Replacing returns with commas in Sed

- Oh My Zsh - a configuration framework for zsh, a replacement for bash

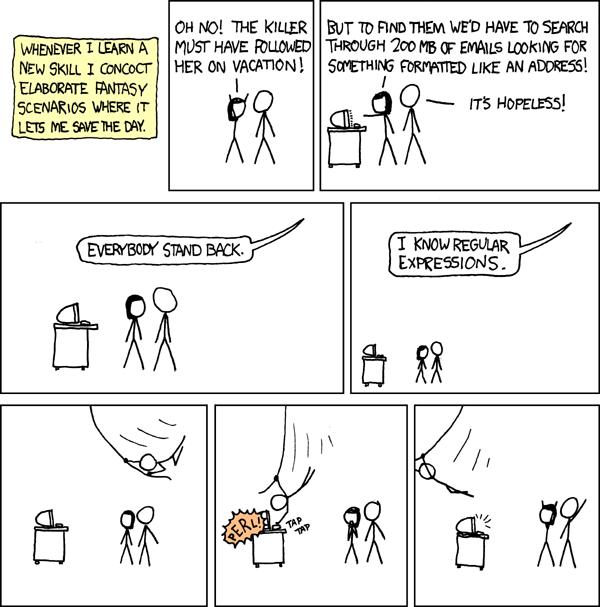

Regular Expressions

Regexes are extremely powerful, if sometimes counter-intuitive. They are vital for defining patterns and replacements in text processing. Although some debate exists as to whether they should be used to parse structured formats like XHTML, they are certainly worth learning.

Having said which, I find this quote pretty amusing!

Some people, when confronted with a problem, think “I know, I'll use regular expressions.” Now they have two problems.

- RegExr - Regex building and testing tool

- Regexper - visualisation tool

- Reference Docs

- An example for validating credit card numbers or validating UK Postcodes
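As a small illustration of a pattern doing both validation and clean-up, here is a Python sketch that checks and tidies UK postcodes. The pattern is a deliberately simplified assumption that only checks the general shape (outward code, then a digit and two letters); it is not the official specification and will accept some strings that are not real postcodes. The name normalise_postcode is hypothetical.

```python
import re

# Simplified shape check: 1-2 letters, a digit, an optional letter or digit,
# optional whitespace, then a digit followed by two letters.
POSTCODE = re.compile(r"^([A-Z]{1,2}[0-9][A-Z0-9]?)\s*([0-9][A-Z]{2})$")


def normalise_postcode(raw):
    """Return the postcode as 'OUTWARD INWARD' in upper case, or None if it does not match."""
    match = POSTCODE.match(raw.strip().upper())
    if match is None:
        return None
    return f"{match.group(1)} {match.group(2)}"
```

The two capture groups are what make the replacement possible: the function rebuilds the postcode with exactly one space between them, whatever spacing the input used.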

A Programming/ Scripting Language

If nothing else, these provide the glue to connect different sections of your data pipeline. The incredible range of libraries available to most scripting languages will make quick work of many common information processing tasks: parsing, spidering, ETL, etc. The choice of language is down to personal preference or compatibility with the team.

It should suffice to say that knowledge of a scripting language is necessary and I don’t feel the need to recommend a particular language. Having said which, I can’t resist saying that I prefer Ruby but have dabbled in Perl and Python. I find the following Ruby gems indispensable: mechanize, nokogiri, fastercsv (now CSV in the 1.9 standard library), and json. I’ve also been using JavaScript and Node for scripting outside of the browser as asynchronous processing has proved to be very quick (if a little peculiar to code at first).

- Why The Lucky Stiff’s (poignant) Guide to Ruby

- A guide written by Matz (the original author of Ruby)

- GitHub’s Ruby Style Guide

- Ruby Toolbox - for finding and selecting gems

- John Resig’s Learning Advanced JavaScript

Text data files

Probably the most basic technology used in information analysis, the flat file is the lowest common denominator. You can almost guarantee that everyone will be able to cope with CSV files (even if they need to be translated before loading into another tool). The expressiveness and rigour of XML make it another vital tool, although it’s rapidly being superseded in the bandwidth-conscious realm of HTTP by the less verbose JSON.

Information analysts ought to be able to handle (read, understand, parse and translate) files in all of these formats even if they immediately convert them to a preferred type.

- XSLT for CSV 2 XML

- XPath Syntax

- Improving an XML feed through CSS and XSLT

- JSON reference with links to parsers
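To make the idea of translating between these formats concrete, here is a minimal Python sketch using only the standard library. It parses CSV text into records and writes them back out as JSON and as XML. The function names and the rows/row element names are hypothetical, and the XML step assumes every column header is already a valid XML element name.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET


def csv_to_records(text):
    """Parse CSV text with a header row into a list of dicts (all values are strings)."""
    return list(csv.DictReader(io.StringIO(text)))


def records_to_json(records):
    """Serialise the records as a JSON array of objects."""
    return json.dumps(records)


def records_to_xml(records, root_tag="rows", row_tag="row"):
    """Serialise the records as XML, one child element per column.

    Assumes each column name is a valid XML element name.
    """
    root = ET.Element(root_tag)
    for record in records:
        row = ET.SubElement(root, row_tag)
        for key, value in record.items():
            ET.SubElement(row, key).text = value
    return ET.tostring(root, encoding="unicode")
```

Going through a neutral in-memory form (a list of dicts here) means each new format only needs one reader and one writer, rather than a converter for every pair of formats.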

Relational Databases, SQL, and NoSQL

This shouldn’t really come as a surprise to anyone. If you’re going to analyse information, you’re going to need to deal with databases. An analyst ought to be able to write SQL, understand normalisation/ denormalisation, and possibly also have some knowledge of ORM. NoSQL databases are also useful when the data structure is better represented by document, graph or key:value structures (and where tables would be very sparse).

- MySQL

- MySQL Cheat Sheet

- SQLite

- database normalisation and denormalisation

- NoSQL Guide

- MongoDB - document database

- Neo4j - graph database

- CouchDB - JSON + MapReduce + HTTP
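For a feel of what writing SQL against a normalised schema looks like, here is a small Python sketch using the built-in sqlite3 module and an in-memory database. The customers and orders tables, their columns, and the sample rows are all made up for illustration.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with two normalised tables and sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
    conn.execute(
        "CREATE TABLE orders ("
        "id INTEGER PRIMARY KEY, "
        "customer_id INTEGER NOT NULL REFERENCES customers(id), "
        "amount REAL NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO customers (id, name) VALUES (?, ?)",
        [(1, "Ada"), (2, "Grace")],
    )
    conn.executemany(
        "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
        [(1, 10.0), (1, 5.5), (2, 20.0)],
    )
    return conn


def totals_by_customer(conn):
    """Join orders to customers and sum the order amounts per customer."""
    query = (
        "SELECT c.name, SUM(o.amount) "
        "FROM customers AS c "
        "JOIN orders AS o ON o.customer_id = c.id "
        "GROUP BY c.id, c.name "
        "ORDER BY c.name"
    )
    return conn.execute(query).fetchall()
```

Customer names live in one table only, so the join is the price paid for normalisation. A denormalised design would copy the name onto every order row and skip the join, at the cost of keeping the copies consistent.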

Statistical Library

Unless you really want to code algorithms for every statistical process from scratch, you’re going to want to adopt and familiarise yourself with a statistical library. I would strongly recommend GNU R although other options include Stata, SPSS, and Matlab. Of course there is probably a library available for your preferred programming language. SciPy and NumPy are very popular choices for Python.

- The R Project

- R Seek - R-specific search engine

- R tag on Stack Overflow (bonus: Cross Validated - the stats-specific Stack Exchange Q&A site)

- Revolution Analytics Blog

- ggplot2

- gretl - Gnu Regression, Econometrics and Time-series Library
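Even a very small library saves you from hand-coding the basics. As a rough sketch, the following uses the statistics module from Python's standard library (chosen here only because it needs no installation; it is far more limited than R, SciPy or NumPy) to produce a few summary figures. The function name summarise is hypothetical.

```python
import statistics


def summarise(values):
    """Return the mean, median and sample standard deviation of a sequence of numbers."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        # stdev() is the sample standard deviation (divides by n - 1).
        "stdev": statistics.stdev(values),
    }
```

Note that stdev needs at least two values; for a whole population rather than a sample, statistics.pstdev is the counterpart.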

Visualisation Framework

Similarly you’ll probably want to use some sort of framework for generating visualisations. I’ve tried many and have found myself coming back to a few:

- d3 data driven documents - A JavaScript library for manipulating documents based on data using HTML, SVG and CSS

- ggplot2 - the tool that usually attracts people to R; excellent for quickly sketching out data graphics

- processing - Processing is an electronic sketchbook for developing ideas - in Java and now Javascript too.

Report Templating

Usually you’re going to want to place your analysis in context with some commentary. If you’re going to be repeating a given report - either in part or in full - then you stand to benefit greatly from using some form of template. Again this will depend upon your preferred programming languages. My favoured tools are listed below.

- Sweave - R + LaTeX and Open Document Format. (I’ve not tried it yet but knitr looks like it could be a useful package.)

- HAML - HTML abstraction markup language - very clear markup with indentation (so you don’t have to write out every tag twice), and you’re able to use Ruby inline.

- Sass - Syntactically Awesome Stylesheets - an extension of CSS3, adding nested rules, variables, mixins, selector inheritance, and more.

- Javascript Template Engine Chooser

- d3 data driven documents - for programmatically creating html from data
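The principle is the same whichever tool you pick: keep the fixed commentary in a template and fill in the figures each time the report runs. Here is a bare-bones Python sketch using string.Template from the standard library; the report wording, the field names and the function render_report are all invented for illustration.

```python
from string import Template

# The fixed part of the report; $name placeholders are filled in per run.
REPORT = Template(
    "Weekly report for $region\n"
    "Orders: $orders\n"
    "Revenue: $revenue\n"
)


def render_report(region, orders, revenue):
    """Fill the template, formatting revenue with thousands separators and two decimals."""
    return REPORT.substitute(
        region=region,
        orders=orders,
        revenue=f"{revenue:,.2f}",
    )
```

substitute raises a KeyError if a placeholder is left unfilled, which is a useful safeguard when a report is regenerated unattended.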

Version Management

Version management is vital for tracking progress, making experimental branches in your work, and coordinating across teams. The clear leaders in this regard are git and the amazing project hosting environment GitHub. I’ve got into the habit of versioning everything: not just my code but the configuration files in my home directory too.

And the rest…

This is just a quick list I wrote off the top of my head. What have I missed? What else do you use?